By Dr. Deepak Kumar Sahu, Founder & CEO, FaceOff Technologies Inc.

Federated Learning (FL) is gaining traction in the US banking sector. Some institutions are actively exploring it, although few major US banks have publicly confirmed that they have fully adopted and deployed it for their main operations.

Federated learning offers a powerful way to train AI models collaboratively without compromising privacy and confidentiality. Instead of requiring financial institutions to pool their sensitive data, it trains the model inside each institution, on data that stays decentralized.

Putting this into practice, Google Cloud is collaborating with Swift, along with technology partners including Rhino Health and Capgemini, to develop a secure, privacy-preserving solution that helps financial institutions combat fraud. The approach combines federated learning techniques with privacy-enhancing technologies (PETs) to enable collaborative intelligence without exposing proprietary data.

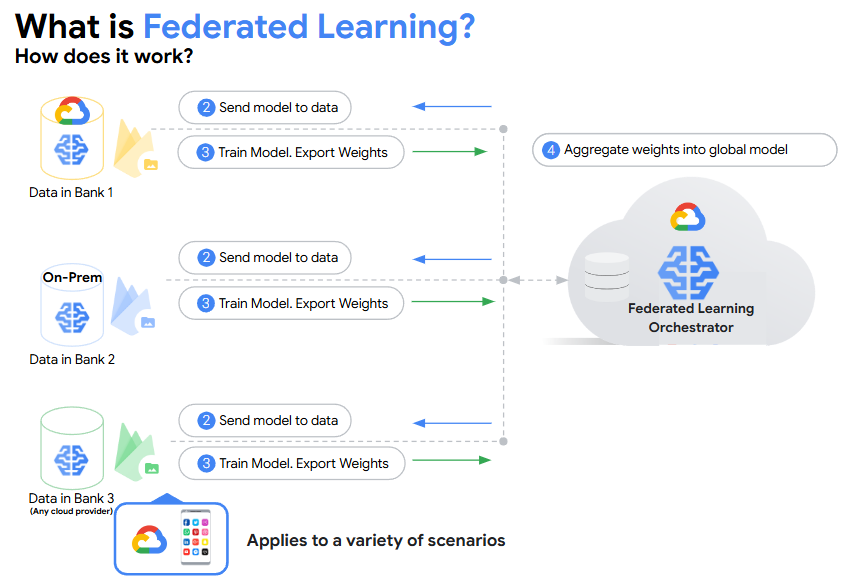

Here’s how it works for Swift:

1. A copy of Swift’s anomaly detection model is sent to each participating bank.

2. Each financial institution trains this model locally on their own data.

3. Only the learnings from this training — not the data itself — are transmitted back to a central server for aggregation, managed by Swift.

4. The central server aggregates these learnings to enhance Swift’s global model.

This approach significantly minimizes data movement and ensures that sensitive information remains within each financial institution's secure environment.
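The four steps above correspond to federated averaging (FedAvg), a widely used FL aggregation rule. The sketch below is a minimal illustration under stated assumptions, not Swift's actual model or protocol: the "anomaly detection model" is a toy logistic regression, and the three banks, their features (`amount_zscore`, `is_new_device`) and labels are hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, features):
    """Fraud probability for one transaction's feature vector."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)))


def local_train(weights, data, lr=0.1, epochs=20):
    """Step 2: a bank refines the received model on its own labelled data
    (plain SGD on logistic loss). Only the resulting weights leave this function."""
    w = list(weights)
    for _ in range(epochs):
        for features, label in data:
            err = predict(w, features) - label
            w = [wi - lr * err * xi for wi, xi in zip(w, features)]
    return w


def fedavg(updates):
    """Step 4: average (weights, n_samples) pairs, weighted by sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def federated_round(global_weights, bank_datasets):
    """Steps 1-3: send the model out, train locally, collect weights only."""
    updates = [(local_train(global_weights, data), len(data)) for data in bank_datasets]
    return fedavg(updates)


# Hypothetical features per transaction: [bias, amount_zscore, is_new_device]
banks = [
    [([1, -0.1, 0], 0), ([1, -0.5, 0], 0), ([1, 2.0, 1], 1), ([1, 0.0, 0], 0)],
    [([1, 2.5, 1], 1), ([1, -0.2, 0], 0), ([1, 1.8, 1], 1)],
    [([1, 0.0, 0], 0), ([1, 3.0, 1], 1)],
]

weights = [0.0, 0.0, 0.0]
for _ in range(5):
    weights = federated_round(weights, banks)

print(f"suspicious: {predict(weights, [1, 2.2, 1]):.2f}, "
      f"routine: {predict(weights, [1, 0.0, 0]):.2f}")
```

Weighting by sample count means a bank with more labelled transactions pulls the global model further, while the coordinator only ever handles weight vectors, never the rows in `banks`.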

Using a Secure Aggregation protocol (as part of the Biological Behaviour Algorithm and Federated Learning Technique) to compute these weighted averages would ensure that the server learns only the combined result of what the participating financial institutions learned from their historic fraud labels, not which financial institution contributed which update, thereby preserving the privacy of each participant in the federated learning process.
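The core idea behind secure aggregation can be sketched with pairwise additive masking: every pair of banks shares a random mask that one adds and the other subtracts, so each individual upload looks random while the masks cancel in the server's sum. This is a minimal illustration, not FaceOff's or Swift's protocol: `pairwise_masks` is a hypothetical stand-in for real key agreement, the fixed-point `SCALE` and modulus are assumptions, and dropout handling used by production protocols is omitted.

```python
import random

MOD = 2**32      # all masked arithmetic is done modulo 2^32
SCALE = 10_000   # fixed-point scale for turning real-valued updates into integers


def encode(x: float) -> int:
    return round(x * SCALE) % MOD


def decode(v: int) -> float:
    if v >= MOD // 2:          # values in the upper half represent negatives
        v -= MOD
    return v / SCALE


def pairwise_masks(n_banks: int, dim: int, seed: int = 0) -> dict:
    """Hypothetical stand-in for key agreement: each pair (i, j), i < j, shares one mask."""
    rng = random.Random(seed)
    return {(i, j): [rng.randrange(MOD) for _ in range(dim)]
            for i in range(n_banks) for j in range(i + 1, n_banks)}


def mask_update(bank: int, update: list, masks: dict, n_banks: int) -> list:
    """Add masks shared with higher-index peers, subtract those shared with lower-index peers."""
    out = [encode(u) for u in update]
    for other in range(n_banks):
        if other == bank:
            continue
        key = (min(bank, other), max(bank, other))
        sign = 1 if bank < other else -1
        out = [(o + sign * m) % MOD for o, m in zip(out, masks[key])]
    return out


def server_sum(masked_updates: list) -> list:
    """Each pairwise mask is added once and subtracted once, so only the total survives."""
    dim = len(masked_updates[0])
    return [decode(sum(u[i] for u in masked_updates) % MOD) for i in range(dim)]


# Usage: three banks with hypothetical local weights and sample counts
local_weights = [[0.5, -1.25], [1.0, 0.75], [-0.25, 0.5]]
counts = [4, 3, 2]
n = len(local_weights)

# Each bank pre-weights its model by its sample count and appends the count itself,
# so the server can form a weighted average without seeing any single bank's values
payloads = [[c * w for w in ws] + [c] for ws, c in zip(local_weights, counts)]
masks = pairwise_masks(n, dim=3)
masked = [mask_update(i, p, masks, n) for i, p in enumerate(payloads)]

totals = server_sum(masked)                        # [weighted sums..., total count]
weighted_avg = [t / totals[-1] for t in totals[:-1]]
```

The server sees only `masked` (random-looking vectors) and `totals`; it recovers the same weighted average it would compute in the clear, but no individual bank's contribution.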

Core Benefits of Federated Learning in Finance

· Shared intelligence: Institutions pool fraud insights without sharing raw records.

· Better detection: Collaborative models catch complex schemes.

· Fewer false positives: More accurate fraud flags, smoother customer experience.

· Faster response: Models adapt quickly to new threats.

· Network effects: More participants = stronger protection.

Integration with existing systems can make adoption easier.

Major US banks are increasingly turning to Federated Learning (FL) to enhance their security without compromising customer privacy. This approach allows banks to collaborate and build more robust fraud detection models by sharing model updates, not raw, sensitive customer data.

The lack of visibility across the payment lifecycle creates vulnerabilities that can be exploited by criminals. A collaborative approach to fraud modeling offers significant advantages over traditional methods in combating financial crimes. To be effective, this approach requires data sharing across institutions, which is often restricted because of privacy concerns, regulatory requirements, and intellectual property considerations.

While traditional authentication methods like PINs and Face ID are still common, a new layer of security is emerging: passive, continuous monitoring. This technique analyzes a customer's unique behavioral patterns—such as how they type, use a mouse, or hold their phone—in real time.

If a transaction or login attempt deviates from this established behavior, the system can flag it as suspicious and require additional verification, all without interrupting the user's experience.
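A deliberately simple sketch of this idea, using only typing rhythm: the user's usual gap between keystrokes is summarised as a mean and standard deviation, and a session whose average gap sits too many standard deviations away triggers extra verification. Real behavioral-biometrics systems combine many signals (mouse movement, how the phone is held) and learned models; the names `TypingProfile`, `decide` and the threshold of 3.0 here are hypothetical.

```python
import statistics
from dataclasses import dataclass


@dataclass
class TypingProfile:
    mean_ms: float
    stdev_ms: float


def build_profile(enrollment_intervals_ms):
    """Summarise a user's usual gap between keystrokes, in milliseconds."""
    return TypingProfile(statistics.mean(enrollment_intervals_ms),
                         statistics.stdev(enrollment_intervals_ms))


def deviation(profile, session_intervals_ms):
    """How many profile standard deviations the session's average gap is from the usual one."""
    session_mean = statistics.mean(session_intervals_ms)
    return abs(session_mean - profile.mean_ms) / profile.stdev_ms


def decide(profile, session_intervals_ms, threshold=3.0):
    """Hypothetical policy: let the session continue silently, or ask for extra verification."""
    return "step_up" if deviation(profile, session_intervals_ms) > threshold else "allow"


profile = build_profile([180, 200, 190, 210, 195, 185, 205])
print(decide(profile, [190, 200, 198]))   # typical rhythm for this user
print(decide(profile, [60, 55, 65]))      # much faster, e.g. scripted input
```

The user is only interrupted in the second case; in the first, monitoring happens entirely in the background.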

We’re seeing more enterprises adopt federated learning to combat global fraud, including global consulting firm Capgemini.

“Payment fraud stands as one of the greatest threats that undermines the integrity and stability of the financial ecosystem, with its impact acutely felt upon some of the most vulnerable segments of our society,” said Sudhir Pai, chief technology and innovation officer, Financial Services, Capgemini.

Federated Learning allows multiple organizations to collaboratively train a machine learning model without sharing their raw data. For banks, this is a significant advantage because it addresses two major concerns: data privacy and compliance with regulations.

Cross-silo Federated Learning (FL) lets banks co-train fraud/AML models without moving raw data off-prem, reducing cloud concentration and third-party risk while boosting detection accuracy. Large FIs and networks are already piloting FL for anomaly detection and fincrime.

● Fraud Detection: Banks can work together to train a more effective fraud detection model by sharing only the model updates, not the sensitive transaction data itself. This allows the model to learn from a broader range of fraud patterns across different institutions, improving accuracy and the ability to detect new, sophisticated fraud schemes.

● Anti-Money Laundering (AML): FL can enhance AML efforts by enabling banks to collectively identify suspicious transaction patterns that might span multiple institutions, which are often missed by traditional, siloed systems.

● Credit Risk Assessment: Financial institutions can use FL to build more robust credit risk models by leveraging data from different sources (e.g., banks, credit unions, and fintech companies) without compromising customer privacy.

Data never leaves bank premises. FL ships model updates (gradients/weights), not transactions or PII. That shrinks the blast radius of a breach and reduces legal-discovery and extraterritorial data-transfer issues.

Collaborative lift. Cross-bank signals catch novel fraud/AML patterns (mules, synthetic IDs, scams) that a single book can’t see. Major card and payment networks have published FL methods for privacy-preserving anomaly detection.

Premium US banks are leading federated learning (FL) adoption for secure AI in financial services:

· JPMorgan Chase & Capital One use FL-like methods for AML, fraud detection, and credit risk, keeping data on-premises while training decentralized models.

· Citibank & HSBC (US) deploy FL in anti-financial crime and fraud prevention, with Citi leveraging FinRegLab’s AML pilots and HSBC partnering with Google Cloud and Swift.

NVIDIA supports banks with FL tools for money-laundering detection: Federated learning with NVIDIA FLARE enhances detection capabilities by enabling financial institutions to collaboratively train a global model without centralizing data. Each bank trains the model locally, maintaining privacy while improving the system's ability to detect complex money laundering patterns.

Industry-Wide Adoption: The consensus among financial technology experts is that while large-scale, full-blown adoption is still in its early stages, many premium banks and fintech companies are either conducting pilot programs or investing in research and development. The main drivers are the need for more accurate AI models and the rising cost and risk associated with data centralization.

Role of Tech Providers: The adoption of FL in banking is often facilitated by third-party technology providers and open-source frameworks. Companies like NVIDIA (with FLARE), Intel (with OpenFL), and Google (with TensorFlow Federated) offer frameworks that enable banks to implement FL solutions. The use of these platforms suggests that banks are building their own FL capabilities rather than relying on an off-the-shelf product.

Introduction of Biological Behaviour Algorithms for Authentication from FaceOff AI (FO AI)

FaceOff AI also uses "Biological Behaviour Algorithms" to secure transactions. This refers to behavioral biometrics, a growing field in banking security. Instead of relying solely on knowledge factors such as a PIN or on physical traits such as fingerprints or facial scans, behavioral biometrics analyzes a user's unique patterns of behavior.

Authentication & Security: The system creates a unique behavioral profile for each user. If a transaction or login attempt deviates from this profile, even if a password or physical biometric is correct, the system can flag it as suspicious and require an additional layer of authentication. This provides continuous, real-time security that goes beyond a one-time login check.
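The "continuous, beyond one-time login" point can be sketched as a monitor that re-scores behavior on every event over a short rolling window, so a session that started with correct credentials can still be challenged later if someone else takes over. This is a hypothetical design for illustration only: `SessionMonitor`, the window of 5 events, the threshold of 3.0 and the profile values are assumptions, and typing rhythm stands in for the richer behavioral profile described above.

```python
from collections import deque
from statistics import mean


class SessionMonitor:
    """Re-checks behaviour on every event instead of once at login (hypothetical design)."""

    def __init__(self, profile_mean_ms, profile_stdev_ms, window=5, threshold=3.0):
        self.mu = profile_mean_ms
        self.sigma = profile_stdev_ms
        self.recent = deque(maxlen=window)   # only the latest `window` gaps count
        self.threshold = threshold

    def observe(self, interval_ms, credentials_ok=True):
        """Feed one keystroke gap; return 'deny', 'step_up' or 'allow'."""
        if not credentials_ok:
            return "deny"
        self.recent.append(interval_ms)
        score = abs(mean(self.recent) - self.mu) / self.sigma
        # A correct password does not override an out-of-profile behaviour score
        return "step_up" if score > self.threshold else "allow"


monitor = SessionMonitor(profile_mean_ms=195.0, profile_stdev_ms=10.8)
gaps = [190, 200, 198, 60, 55, 65, 70, 58]   # owner types, then someone else takes over
decisions = [monitor.observe(g) for g in gaps]
print(decisions)
```

The rolling window is the design choice that makes the check continuous: old behavior ages out, so a mid-session change is caught within a few events rather than at the next login.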

Synergy with FL: When combined with Federated Learning, behavioral biometric data can be used to improve security models. For example, banks could collaboratively train a model to detect fraud based on collective behavioral patterns without sharing raw customer data. This allows each bank's model to become more effective at identifying new fraud tactics and anomalies.
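One of the simplest forms of this collaboration is a federated baseline: each bank computes only aggregate statistics (count, sum, sum of squares) of a behavioral feature over sessions it has confirmed as fraudulent, and a coordinator merges them into a shared mean and standard deviation that every bank can score against. This is a minimal sketch under assumptions, not FaceOff's algorithm: the feature (keystroke gap), the `LocalStats` structure and the sample values are hypothetical, and a Gaussian baseline stands in for the richer models banks would train with federated averaging.

```python
import math
from dataclasses import dataclass


@dataclass
class LocalStats:
    """The only thing a bank shares: aggregates, not per-session values."""
    count: int
    total: float
    total_sq: float


def summarise(values):
    """Computed inside the bank from its own labelled sessions."""
    return LocalStats(len(values), float(sum(values)), float(sum(v * v for v in values)))


def combine(stats_list):
    """Merge all banks' aggregates into a global mean and population standard deviation."""
    n = sum(s.count for s in stats_list)
    mean = sum(s.total for s in stats_list) / n
    var = sum(s.total_sq for s in stats_list) / n - mean ** 2
    return mean, math.sqrt(max(var, 0.0))   # clamp tiny negative rounding error


# Hypothetical keystroke gaps (ms) from sessions each bank confirmed as fraudulent
bank_a = summarise([60, 70])
bank_b = summarise([50, 80, 90])
fraud_mean, fraud_stdev = combine([bank_a, bank_b])
print(f"shared fraud baseline: {fraud_mean:.1f} ms +/- {fraud_stdev:.1f} ms")
```

The merged result matches what pooling the raw values would give, yet no per-session value leaves either bank. At scale, the sum-of-squares formula can lose precision, and the shared aggregates themselves could be protected with secure aggregation.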

Challenges include technical complexity (e.g., heterogeneous data formats across banks), communication overhead, and the need to keep each participant's updates private, which is where secure aggregation protocols are emerging. Overall, FL adoption is projected to grow, with benefits such as a reported 38% improvement in model generalizability in similar sectors.

Integrating behavioral biometrics helps secure transactions with less friction and combats fraud more effectively. This dual approach supports regulatory compliance and builds trust, paving the way for a more resilient banking ecosystem.

This approach addresses growing concerns over data privacy and security, particularly in the banking sector, where regulations like the Bank Secrecy Act (BSA) and Anti-Money Laundering (AML) requirements demand robust protections.

US banks are increasingly adopting FL to mitigate risks associated with cloud-based data storage, such as breaches, regulatory non-compliance, and operational vulnerabilities.

It’s time to build a safer financial ecosystem—where collaborative federated learning, rooted in privacy, security, and scalability, transforms fraud prevention and fosters a more resilient, trustworthy, and secure global system.

In summary, by combining Federated Learning with behavioral biometrics, banks can create a powerful, multi-layered defense system that is constantly learning and adapting to new threats, far exceeding the security capabilities of older, siloed systems.

For more information, please write to deepak@faceoff.world
